Google Introduces PaLM To Rival OpenAI and GPT-3

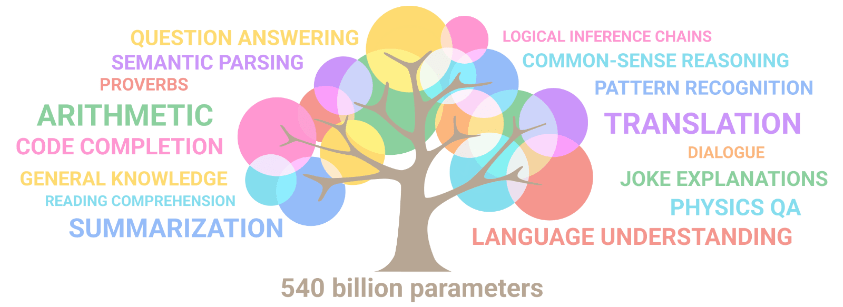

Recently, Google released its own large language model (LLM), called PaLM (Pathways Language Model). PaLM is designed to help AI applications understand and generate natural language. It is based on the Transformer, a deep learning architecture introduced by Google researchers in 2017 for sequence modeling tasks such as natural language processing (NLP). Compared with OpenAI's GPT-3, PaLM is much larger, with 540 billion parameters versus GPT-3's 175 billion. That extra size makes it better suited to more complex tasks such as multi-step reasoning.

Like GPT-3, PaLM is a decoder-only Transformer. It does not have a separate encoder. Input text is first split into tokens, and each token is mapped to a vector representation (an embedding). A stack of Transformer layers then uses self-attention to turn these vectors into context-aware representations, and the model uses them to predict the next token. To generate longer text, the model repeats this step: each predicted token is appended to the input, and the model predicts the one after it. This is how PaLM can complete a sentence or write entire paragraphs from a short prompt.
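The generation loop described above (predict a token, append it, repeat) can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not PaLM's implementation. A hypothetical bigram count table (`next_counts`) stands in for the Transformer, and `predict_next` and `generate` are illustrative names. Only the autoregressive, greedy decoding loop matches what a real model does.

```python
from collections import Counter, defaultdict

# Toy training text; a real model like PaLM learns from hundreds of billions of tokens.
corpus = "the cat sat on the mat . the cat ate the fish .".split()

# Hypothetical stand-in for the Transformer: count which token follows which.
next_counts = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    next_counts[prev][nxt] += 1


def predict_next(tokens):
    """Return the most likely next token (this toy model only looks at the last token)."""
    candidates = next_counts.get(tokens[-1])
    if not candidates:
        return None  # no known continuation for this token
    # most_common breaks ties by first-encountered order
    return candidates.most_common(1)[0][0]


def generate(prompt, max_new_tokens=5):
    """Autoregressive greedy decoding: predict a token, append it, repeat."""
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        nxt = predict_next(tokens)
        if nxt is None:
            break
        tokens.append(nxt)  # the prediction becomes part of the next input
    return " ".join(tokens)
```

For example, `generate("the cat")` appends one token at a time until it has added five. A real LLM conditions each prediction on the whole context rather than only the last token, and often samples instead of always choosing the top candidate.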

Benefits of PaLM

PaLM offers several benefits for AI applications. First, it can handle a variety of tasks, from text summarization to question answering. Second, its large number of parameters lets it capture more knowledge from its training data than smaller models can. Finally, Google reported that PaLM produced more accurate results than earlier models, including GPT-3, on a wide range of language benchmarks.

However, PaLM also has some potential drawbacks. It requires a large amount of data to train and can be computationally expensive to run. It is also not yet widely adopted in industry because it was released relatively recently.
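A rough calculation shows why a model of this size is expensive to run. The sketch below estimates only the memory needed to store the weights. It assumes 16-bit precision (2 bytes per parameter) and uses the published sizes of the largest models: 540 billion parameters for PaLM and 175 billion for GPT-3. It ignores activations, caches, and training-time optimizer state, all of which add substantially more. The helper `weight_memory_gb` is a hypothetical name for illustration.

```python
def weight_memory_gb(num_params, bytes_per_param=2):
    """Approximate memory for model weights alone, in decimal GB (1 GB = 1e9 bytes).

    Assumes a fixed number of bytes per parameter (2 = 16-bit floats).
    """
    return num_params * bytes_per_param / 1e9


PALM_PARAMS = 540e9  # largest PaLM model
GPT3_PARAMS = 175e9  # largest GPT-3 model

for name, n in [("PaLM", PALM_PARAMS), ("GPT-3", GPT3_PARAMS)]:
    print(f"{name}: ~{weight_memory_gb(n):,.0f} GB of weights at 16-bit precision")
```

Even under these simplifying assumptions, PaLM's weights alone need on the order of a terabyte. That is far more than a single accelerator holds, so the model must be split across many chips.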

Comparison with OpenAI's GPT-3

Compared with OpenAI's GPT-3, PaLM has several advantages. First, it has roughly three times as many parameters (540 billion versus 175 billion), which makes it better suited to complex tasks. Second, Google reported that PaLM gives more accurate answers than GPT-3 on many language benchmarks. Finally, PaLM is reported to make better use of context when generating responses.

However, PaLM also has drawbacks compared with GPT-3. It requires a large amount of data to train and may be too computationally expensive for some applications. It is also not yet widely adopted in industry because it was released relatively recently.

Conclusion

In conclusion, Google has released its own LLM, PaLM, which is designed to help AI applications understand and generate natural language. PaLM has far more parameters than OpenAI's GPT-3, making it better suited to complex applications, and it has the potential to generate more accurate and human-like responses. However, it is still relatively new and may be computationally expensive for some applications. Overall, PaLM is an exciting development in artificial intelligence that will likely find a wide range of applications in the near future.

Adil Sattar is a seasoned writer, SEO expert, and technology journalist with years of hands-on experience in the digital content and IT industries. With a passion for uncovering the latest breakthroughs in technology, Adil has dedicated his career to making complex tech concepts simple, engaging, and accessible to a broad audience.

Armed with deep expertise in search engine optimization, Adil understands not just how to write great content — but how to make sure it reaches the right audience. His work spans a wide range of technology topics including artificial intelligence, cybersecurity, software development, consumer electronics, and digital innovation.

As the founder and lead writer at TechBeams, Adil has built a platform trusted by tech enthusiasts, IT professionals, and everyday readers alike. His unique blend of technical knowledge, SEO acumen, and storytelling ability sets TechBeams apart as a go-to destination for reliable and insightful tech content.

When he’s not writing or researching the next big thing in tech, Adil is constantly learning, adapting, and staying ahead of the curve in an ever-evolving digital landscape.